August 16, 2025

The rapid increase in energy use by artificial intelligence technologies has spurred intensive research into more efficient computing methods. While conventional approaches continue to focus on incremental improvements to the hardware and software of existing systems, quantum computing is emerging as a more radical alternative with transformative potential.

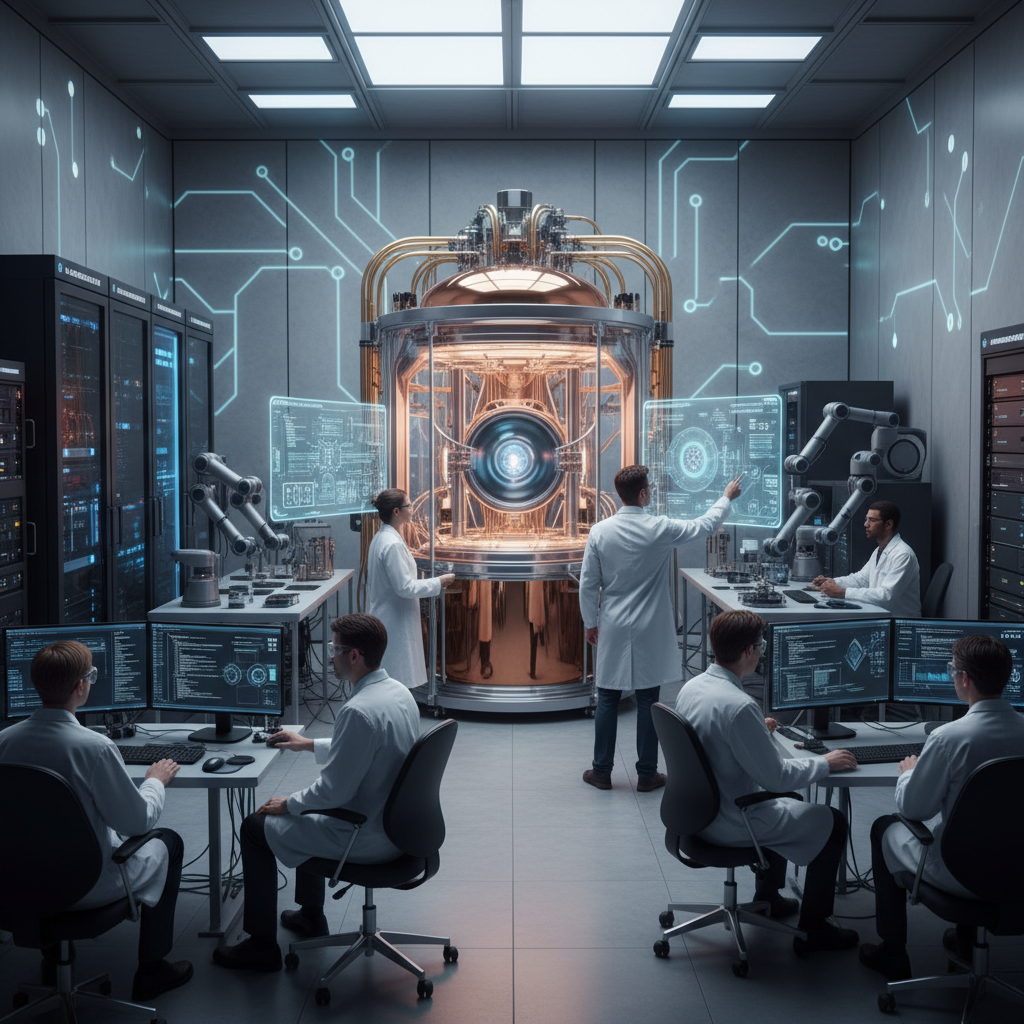

Quantum hardware offers a potential alternative to traditional silicon-based systems because its inherent parallelism suits some of the mathematical operations central to AI, especially in machine learning. Today's quantum processors remain limited by noise and low qubit counts, which prevent them from handling complex modern AI models, yet researchers are actively preparing for a future in which AI runs on quantum hardware.

Researchers at two companies have taken a substantial step by publishing a draft paper that shows how they moved classical image data onto two different quantum processors to run a basic AI image classification task. The result suggests that the potential of quantum AI reaches beyond theory into practice.

The field of AI covers many machine learning techniques, and quantum computing can be applied to AI in correspondingly diverse ways. Some advantages lie purely in mathematical efficiency. In theory, quantum computers offer significant speedups for matrix operations, which are the foundation of many machine learning algorithms. An extensive analysis identifies multiple pathways through which quantum hardware could transform machine learning practice.

The relationship between quantum hardware and artificial intelligence goes beyond raw computational speed. On classical hardware, processing units and memory are separate, which presents a major obstacle when running complex AI models such as neural networks: the constant movement of data between the two slows computation. Quantum computers address this problem because they reduce the need for such transfers. Qubits encode the data directly, and computation happens through specific manipulations of those qubits called gates.

Existing studies show that quantum systems can surpass classical systems in supervised machine learning tasks even when the initial data is stored on classical hardware. This machine learning approach relies on variational quantum circuits. The two-qubit gate operations in these circuits are controlled by a tunable parameter that is stored on the classical side and sent to the hardware through the control signals that manipulate the qubits. The mechanism resembles communication between neurons in a neural network: the two-qubit gate operation acts as the transfer of information, while the tunable parameter plays the role of a signal weight.
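The weight analogy can be made concrete with a minimal sketch in plain Python. It simulates a single parameterized two-qubit gate and shows how a classically stored angle decides how much the state of one qubit affects the other. The choice of a controlled-RY gate, the function names, and the angle values are illustrative assumptions, not the circuit used in the research.

```python
import math

def controlled_ry(theta):
    """4x4 matrix of a controlled-RY gate (assumed gate choice).

    Basis order is |00>, |01>, |10>, |11>, with the control as the first qubit.
    The target qubit is rotated by theta only when the control is |1>.
    """
    c, s = math.cos(theta / 2), math.sin(theta / 2)
    return [
        [1, 0, 0, 0],
        [0, 1, 0, 0],
        [0, 0, c, -s],
        [0, 0, s, c],
    ]

def apply(gate, state):
    """Multiply a gate matrix by a state vector of amplitudes."""
    return [sum(g * a for g, a in zip(row, state)) for row in gate]

def prob_target_one(state):
    """Probability of measuring the target (second) qubit as 1."""
    return abs(state[1]) ** 2 + abs(state[3]) ** 2

# Control qubit in |1>, target qubit in |0>: the state |10>.
state_in = [0.0, 0.0, 1.0, 0.0]

# theta lives on the classical side, like a weight in a neural network.
for theta in (0.0, math.pi / 2, math.pi):
    state_out = apply(controlled_ry(theta), state_in)
    print(f"theta = {theta:.3f}  P(target = 1) = {prob_target_one(state_out):.3f}")
```

At an angle of zero the control qubit has no effect on the target, and at an angle of pi the target is flipped with certainty. Training a variational circuit amounts to adjusting many such angles until the measured outputs match the labels.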

This architecture is the main focus of a joint team from the Honda Research Institute and Blue Qubit. The team's latest research concentrated on the key task of converting classical data into quantum form for subsequent processing and analysis. The team then tested its data encoding and classification techniques in practice on two separate quantum processors.

The research team decided to work on a basic image classification task. The Honda Scenes dataset provided them with raw data, which included images taken across 80 driving hours in Northern California, and each image was tagged with specific contextual information. The specific question they aimed to answer using quantum machine learning was a binary one: Does the scene depicted contain snow?

The complete image dataset was kept on standard classical computer systems, so the images had to be converted into quantum information before they could be classified on quantum hardware. The research team tested three different data encoding techniques, varying both how the image pixels were split into segments and how qubits were assigned to those segments. Training was carried out on a classical simulator of a quantum processor, which established the optimal parameters for the two-qubit gate operations.
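As a rough illustration of what converting pixels into quantum information can look like, the sketch below uses angle encoding, a common textbook technique: the image is split into segments, each segment is averaged, and the average sets the rotation angle of one qubit. This is an assumption made for illustration and is not necessarily one of the three encodings the team tested. The function names and the toy image are hypothetical.

```python
import math

def segment_means(pixels, n_segments):
    """Split a flat list of grayscale pixels (0..255) into equal segments
    and return the mean of each. Trailing pixels that do not fill a whole
    segment are ignored in this sketch."""
    size = len(pixels) // n_segments
    return [sum(pixels[i * size:(i + 1) * size]) / size for i in range(n_segments)]

def angle_encode(value, max_value=255.0):
    """Map a pixel value to an angle in [0, pi] and return the amplitudes
    (for |0> and |1>) of a qubit after an RY rotation by that angle from |0>."""
    theta = math.pi * value / max_value
    return (math.cos(theta / 2), math.sin(theta / 2))

def encode_image(pixels, n_qubits):
    """Assign one qubit per segment (an assumed pixel-to-qubit mapping)."""
    return [angle_encode(mean) for mean in segment_means(pixels, n_qubits)]

# Hypothetical 8-pixel grayscale "image", encoded onto 4 qubits.
image = [0, 0, 255, 255, 128, 128, 64, 64]
qubits = encode_image(image, n_qubits=4)
for i, (amp0, amp1) in enumerate(qubits):
    print(f"qubit {i}: P(measure 1) = {amp1 ** 2:.3f}")
```

Changing `n_qubits` changes how coarsely the image is summarized, which is one simple way to picture the trade-off between pixel segmentation and qubit count described above.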

The researchers then ran their trained models on two quantum processors with contrasting capabilities. The first, from IBM, has 156 qubits but a slightly higher error rate during gate operations. The second, from Quantinuum, has a very low error rate but fewer qubits, 56 in total. Classification accuracy improved when the researchers increased either the number of qubits or the number of gate operations performed.

The system worked: its accuracy was well above what random guessing would produce. That accuracy nevertheless remained below what standard algorithms achieve on traditional hardware. This underscores the current reality: present-day quantum hardware lacks the qubit counts and the low error rates it would need to surpass classical systems in real-world AI applications.

The investigation clearly demonstrates that real-world quantum hardware today can successfully perform AI algorithms that researchers have long hypothesized about. Those who wish to use quantum computing for practical applications must wait for future hardware improvements just like everyone else in this field. The latest research provides a powerful preview of a future where quantum AI transitions from theoretical potential to practical implementation.